Market Overview:

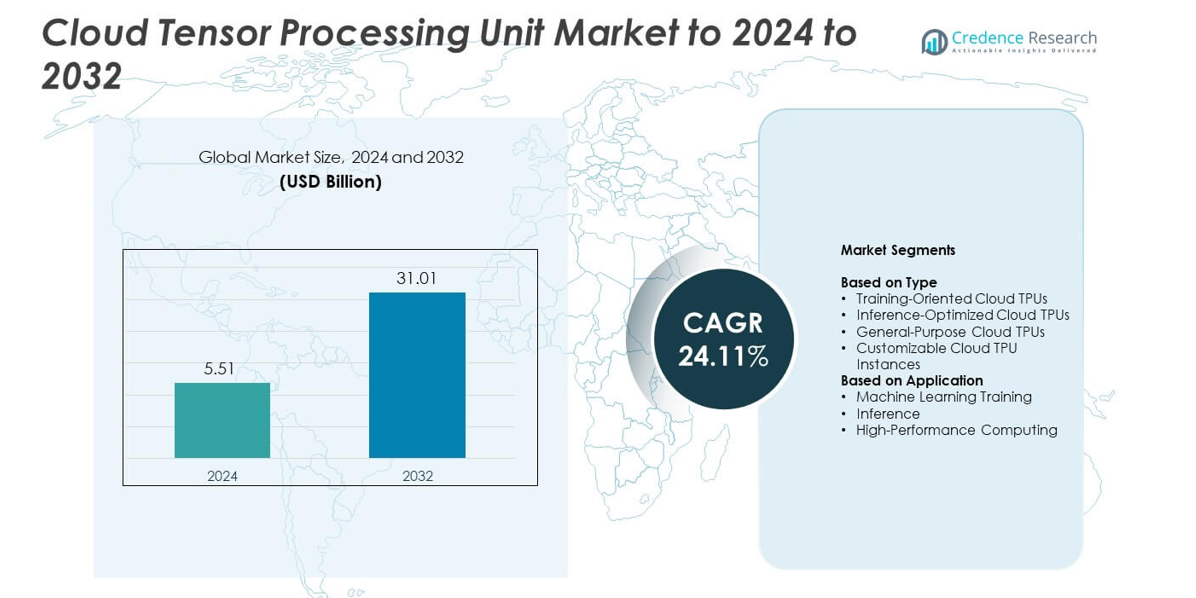

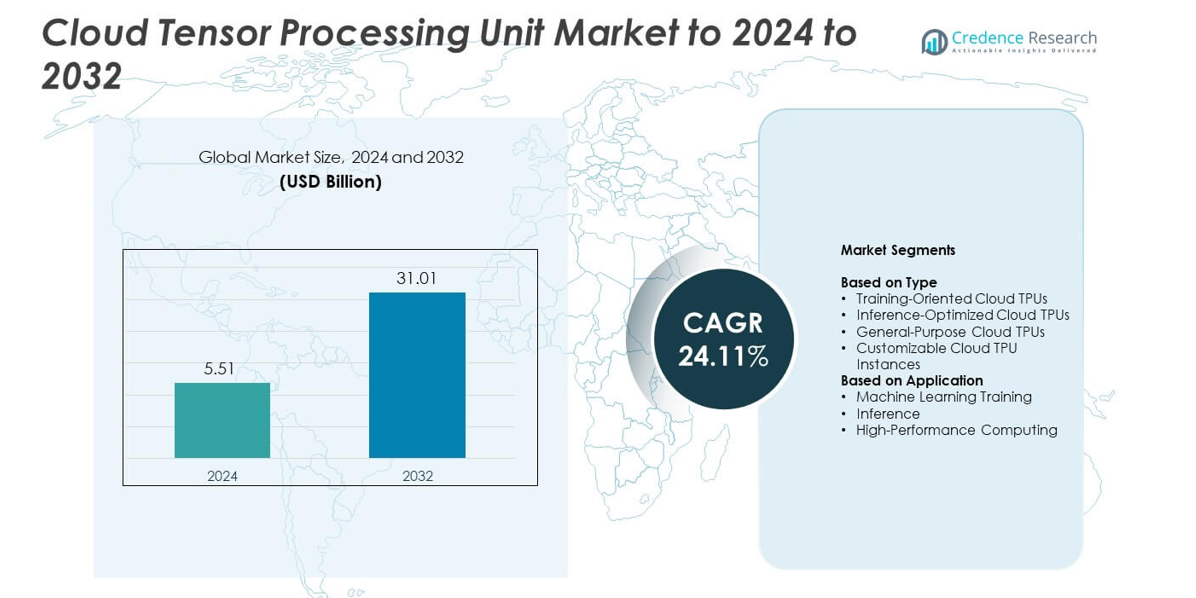

The Cloud Tensor Processing Unit Market was valued at USD 5.51 Billion in 2024 and is anticipated to reach USD 31.01 Billion by 2032, growing at a CAGR of 24.11% over the 2025-2032 forecast period.
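The headline growth rate can be checked against the two market-size figures. The sketch below is illustrative only: it assumes the CAGR is compounded over the eight years from the 2024 base year to 2032, and the function and variable names are hypothetical rather than taken from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.24 means 24%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market-size figures quoted in the report, in USD billions.
START_USD_BN = 5.51   # 2024
END_USD_BN = 31.01    # 2032
YEARS = 2032 - 2024   # assumption: eight compounding periods

rate = cagr(START_USD_BN, END_USD_BN, YEARS)
print(f"Implied CAGR: {rate:.2%}")

# Year-by-year path implied by the reported 24.11% rate.
REPORTED_CAGR = 0.2411
projection = {
    2024 + n: START_USD_BN * (1 + REPORTED_CAGR) ** n for n in range(YEARS + 1)
}
for year, value in projection.items():
    print(f"{year}: USD {value:.2f} Billion")
```

Under that eight-year assumption the implied rate comes out at roughly 24.1%, in line with the reported figure.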

| REPORT ATTRIBUTE | DETAILS |
|---|---|
| Historical Period | 2020-2023 |
| Base Year | 2024 |
| Forecast Period | 2025-2032 |
| Cloud Tensor Processing Unit Market Size 2024 | USD 5.51 Billion |
| Cloud Tensor Processing Unit Market, CAGR | 24.11% |
| Cloud Tensor Processing Unit Market Size 2032 | USD 31.01 Billion |

The Cloud Tensor Processing Unit Market includes leading providers such as Amazon Web Services (AWS), Oracle Cloud Infrastructure, Microsoft Azure, Alibaba Cloud, Google Cloud Platform, and IBM Cloud, each expanding advanced AI compute capabilities to support enterprise-scale model training and inference. These companies compete by enhancing TPU performance, reducing latency, and improving cost efficiency across cloud environments. North America dominated the market in 2024 with about 41% share due to strong AI adoption, extensive cloud infrastructure, and high investment in large-model development. Europe and Asia Pacific followed with growing demand from automation, analytics, and generative AI workloads.

Market Insights

- The Cloud Tensor Processing Unit Market reached USD 5.51 Billion in 2024 and is projected to hit USD 31.01 Billion by 2032, growing at a CAGR of 24.11%.

- Strong demand for large-model training and scalable AI compute drives rapid adoption, with training-oriented cloud TPUs holding about 46% share due to heavy enterprise usage in deep learning workloads.

- Generative AI expansion and rising interest in energy-efficient accelerated computing shape key market trends, supported by growing preference for customizable TPU instances across industries.

- Competition intensifies as major cloud providers enhance TPU performance, optimize pricing models, and expand global data center networks to improve access and efficiency for AI developers.

- North America led the market in 2024 with around 41% share, followed by Europe at about 27% and Asia Pacific at roughly 24%, while Latin America and Middle East & Africa together accounted for nearly 8% as adoption continued to grow.


Market Segmentation Analysis:

By Type

Training-oriented cloud TPUs held the dominant position in 2024 with about 46% share due to strong use in large-scale model training across generative AI, speech models, and vision networks. These TPUs delivered high matrix throughput and supported parallelism for complex workloads. Inference-optimized TPUs expanded as companies scaled real-time AI services. General-purpose TPUs gained steady demand from cloud users seeking balanced performance. Customizable TPU instances grew as enterprises adopted flexible configurations for cost-efficient deployments.

- For instance, Google’s Cloud TPU v4 pod links 4,096 chips and delivers about 1.1 exaFLOPS of peak compute for large-scale model training.
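The pod-level figure in this example can be translated into an approximate per-chip number. The sketch below only divides the two values quoted above (about 1.1 exaFLOPS across 4,096 chips); the numeric precision behind the peak figure is not stated in the text, and the helper name is hypothetical.

```python
EXA = 1e18   # FLOPS in one exaFLOPS
TERA = 1e12  # FLOPS in one teraFLOPS


def per_chip_tflops(pod_peak_flops: float, chips: int) -> float:
    """Average peak throughput per chip, in teraFLOPS."""
    return pod_peak_flops / chips / TERA


# Figures quoted in the text for a Cloud TPU v4 pod.
POD_PEAK_FLOPS = 1.1 * EXA
POD_CHIPS = 4096

tpu_v4_tflops = per_chip_tflops(POD_PEAK_FLOPS, POD_CHIPS)
print(f"Approximate peak per chip: {tpu_v4_tflops:.0f} TFLOPS")
```

Because the pod figure is rounded, the result (somewhat under 270 TFLOPS per chip) should be read as an order-of-magnitude check rather than a chip specification.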

By Application

Machine learning training led the segment in 2024 with nearly 57% share because enterprises used TPUs to accelerate deep learning workloads and reduce training time for large foundation models. Training tasks benefited from improved compute density and high-speed interconnects. Inference workloads increased as AI adoption in cloud services and automation grew. High-performance computing applications advanced as research groups and technical teams used TPUs for simulation, optimization, and advanced analytics.

- For instance, Amazon EC2 Inf2 instances use up to 12 Inferentia2 chips to provide around 2.3 petaFLOPS of BF16 or FP16 compute for low-latency inference.

Key Growth Drivers

Rising Adoption of Large AI Models

Demand for cloud TPUs grew as organizations trained larger and more complex AI models across language, vision, and multimodal tasks. These workloads required high compute density, low latency, and strong parallel processing, which TPUs delivered at scale. Enterprises shifted toward accelerated computing to improve model accuracy and reduce training cycles. This shift strengthened cloud TPU usage across tech, healthcare, finance, and retail, making advanced model training a major growth driver for the market.

- For instance, xAI operates the world’s largest operational AI supercomputer, the Colossus cluster, which uses an estimated 200,000 GPUs (a mix of H100 and H200 units) as of late 2024/early 2025.

Expansion of AI Integration Across Industries

Wider adoption of AI workloads across sectors increased TPU deployment in cloud environments. Companies relied on accelerated infrastructure to support applications such as predictive analytics, image recognition, autonomous decision-making, and personalized digital services. This growth pushed demand for scalable and cost-efficient TPU clusters that handled diverse enterprise workloads. Rising digital transformation strategies across global industries reinforced this expansion, making broad AI integration a key growth driver for cloud TPU demand.

- For instance, the Salesforce Einstein platform now delivers over 200 billion AI-powered predictions every day across all Salesforce products, including sales, service, marketing, and commerce clouds.

Growth in Cloud-Based AI Infrastructure Investments

Cloud providers expanded TPU availability to support rising enterprise migration toward scalable AI compute. Investments focused on high-efficiency TPU pods, energy-optimized architectures, and global data center expansion. These advancements provided stronger performance and lower operating costs for AI development teams. Increased cloud adoption created long-term demand for TPU-based compute resources, positioning infrastructure expansion as a strategic growth driver for the market.

Key Trends and Opportunities

Shift Toward Generative AI Workloads

Generative AI adoption accelerated TPU demand as enterprises built and deployed large-scale models for content creation, automation, and data augmentation. Cloud TPUs supported fast training cycles and efficient inference for generative systems. This trend opened opportunities for cloud providers to introduce specialized TPU versions optimized for large memory needs and distributed training. Strong enterprise interest in generative applications positioned this shift as a major trend and opportunity.

- For instance, SAP’s roadmap for SAP Business AI targets roughly 400 embedded AI use cases by 2025, building on more than 200 existing AI features across its enterprise portfolio.

Rising Demand for Energy-Efficient AI Compute

Energy efficiency became a priority as organizations sought lower operating costs and greener AI infrastructure. Cloud TPUs offered strong performance per watt, making them attractive for sustainable compute strategies. Providers introduced improved thermal design, optimized interconnects, and next-generation tensor cores to maximize efficiency. The push for sustainable AI systems created opportunities for TPU-based data centers to gain adoption among enterprises focused on ESG goals and reduced power consumption.

- For instance, Huawei’s Atlas 950 SuperCluster combines 64 SuperPoDs and 524,288 Ascend 950DT accelerators to deliver about 1 FP4 zettaFLOPS for inference and 524 FP8 exaFLOPS for training within one system.
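The cluster figures in this example can be broken down to see what they imply per SuperPoD and per accelerator. This is a rough sketch that uses only the numbers quoted above and assumes the accelerators are spread evenly across the SuperPoDs; the variable names are illustrative.

```python
ZETTA = 1e21
EXA = 1e18
PETA = 1e15

# Figures quoted in the text for the Atlas 950 SuperCluster.
SUPERPODS = 64
ACCELERATORS = 524_288
FP4_INFERENCE_FLOPS = 1 * ZETTA
FP8_TRAINING_FLOPS = 524 * EXA

# Assumption: accelerators are distributed evenly across SuperPoDs.
chips_per_superpod = ACCELERATORS // SUPERPODS

# Implied peak throughput per accelerator, in petaFLOPS.
fp4_pflops_per_chip = FP4_INFERENCE_FLOPS / ACCELERATORS / PETA
fp8_pflops_per_chip = FP8_TRAINING_FLOPS / ACCELERATORS / PETA

print(f"Accelerators per SuperPoD: {chips_per_superpod}")
print(f"Implied FP4 per accelerator: {fp4_pflops_per_chip:.2f} PFLOPS")
print(f"Implied FP8 per accelerator: {fp8_pflops_per_chip:.2f} PFLOPS")
```

The breakdown suggests roughly 1 petaFLOPS of FP8 and about 2 petaFLOPS of FP4 per accelerator, which is the usual doubling seen when precision is halved.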

Growth of Customizable TPU Instances

Flexible TPU configurations gained attention as enterprises sought tailored performance levels for specific workloads. This trend supported wider adoption among mid-sized businesses and research groups that required scalable yet cost-efficient compute. Cloud vendors offered adjustable memory sizes, core counts, and network capacity, enabling finer workload matching. This customization opportunity expanded the market by reducing barriers to adopting high-performance TPU compute environments.

Key Challenges

High Cost of Advanced AI Compute Infrastructure

Cloud TPU adoption faced challenges due to high compute costs, especially for large-scale training workloads. Complex AI models demanded extended training cycles, which increased spending for enterprises with limited budgets. Cost-sensitive sectors struggled to adopt TPU-powered systems at scale. Although cloud vendors introduced optimized pricing and shared clusters, overall cost barriers remained a major challenge for broader adoption in emerging markets and smaller organizations.

Technical Complexity and Integration Barriers

Enterprises often faced difficulty integrating cloud TPUs into existing AI pipelines due to specialized tooling, compatibility requirements, and limited in-house expertise. Migrating workloads from GPUs to TPUs required new development practices and updated model architectures. This complexity slowed adoption for teams lacking advanced machine learning engineering skills. Limited availability of TPU-optimized frameworks also created integration friction, making technical complexity a significant challenge for the market.

Regional Analysis

North America

North America held the largest share in 2024 with about 41% due to strong cloud adoption, advanced AI infrastructure, and heavy investment from major providers. The region benefited from rapid growth in generative AI, autonomous systems, and enterprise automation, which increased demand for high-performance TPU clusters. Technology companies, financial institutions, and healthcare networks expanded large-scale AI projects, strengthening regional leadership. Supportive innovation policies and a mature digital ecosystem further pushed TPU integration across industries.

Europe

Europe accounted for nearly 27% share in 2024 as enterprises adopted cloud-based AI solutions to support automation, smart manufacturing, and advanced data analytics. Increased regulatory focus on trustworthy AI encouraged investment in secure and efficient compute systems. Cloud vendors expanded TPU availability across major EU countries, enabling enterprise users to train complex models with improved compliance. Strong digitalization efforts in automotive, industrial equipment, and public services also supported wider TPU deployments across the region.

Asia Pacific

Asia Pacific captured about 24% share in 2024, supported by rapid growth in cloud spending, AI-driven consumer platforms, and large-scale digital transformation across enterprises. Strong demand from telecommunications, e-commerce, and financial services boosted TPU usage for training and inference workloads. Governments increased investment in AI research and hyperscale data centers, strengthening regional uptake. Expanding adoption of machine learning in manufacturing, robotics, and smart city projects positioned Asia Pacific as the fastest-growing regional market.

Latin America

Latin America held close to 5% share in 2024 as enterprises gradually adopted cloud-based AI solutions to improve analytics, automation, and digital services. TPU deployment increased in sectors such as banking, retail, and telecommunications, driven by rising demand for faster model processing. Cloud providers expanded regional data center presence, improving access to high-performance compute. Although adoption remained lower than major regions, increasing digital modernization and AI investment supported steady market growth.

Middle East & Africa

Middle East & Africa accounted for around 3% share in 2024, supported by emerging AI deployment in government, energy, banking, and urban development projects. Countries in the Gulf region invested in cloud infrastructure to accelerate national digital strategies and smart city initiatives. Growing demand for machine learning and predictive analytics encouraged early TPU adoption. Limited technical expertise and slower enterprise modernization moderated growth, but improving cloud availability continued to expand adoption across key industries.
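The regional shares quoted in this section can be converted into approximate 2024 revenue by region. The sketch below treats the rounded percentages ("about", "nearly", "around") as exact, which is an assumption, and applies them to the USD 5.51 Billion market size; the names are illustrative.

```python
MARKET_SIZE_2024_USD_BN = 5.51

# Approximate 2024 regional shares from the regional analysis, in percent.
REGIONAL_SHARE_PCT = {
    "North America": 41,
    "Europe": 27,
    "Asia Pacific": 24,
    "Latin America": 5,
    "Middle East & Africa": 3,
}

# Sanity check: the rounded shares should account for the whole market.
total_pct = sum(REGIONAL_SHARE_PCT.values())

# Implied 2024 revenue per region, in USD billions.
regional_usd_bn = {
    region: MARKET_SIZE_2024_USD_BN * pct / 100
    for region, pct in REGIONAL_SHARE_PCT.items()
}

print(f"Shares sum to {total_pct}%")
for region, value in regional_usd_bn.items():
    print(f"{region}: USD {value:.2f} Billion")
```

On these figures North America corresponds to roughly USD 2.26 Billion, while Latin America and Middle East & Africa together account for about USD 0.44 Billion.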

Market Segmentations:

By Type

- Training-Oriented Cloud TPUs

- Inference-Optimized Cloud TPUs

- General-Purpose Cloud TPUs

- Customizable Cloud TPU Instances

By Application

- Machine Learning Training

- Inference

- High-Performance Computing

By Geography

- North America

- Europe

  - Germany

  - France

  - U.K.

  - Italy

  - Spain

  - Rest of Europe

- Asia Pacific

  - China

  - Japan

  - India

  - South Korea

  - South-east Asia

  - Rest of Asia Pacific

- Latin America

  - Brazil

  - Argentina

  - Rest of Latin America

- Middle East & Africa

  - GCC Countries

  - South Africa

  - Rest of the Middle East and Africa

Competitive Landscape

The competitive landscape in the Cloud Tensor Processing Unit Market is led by Amazon Web Services (AWS), Oracle Cloud Infrastructure, Microsoft Azure, Alibaba Cloud, Google Cloud Platform, and IBM Cloud, with rivalry centered on advanced AI compute capabilities and scalable cloud architectures. Providers focus on improving training throughput, lowering inference latency, and offering energy-efficient TPU alternatives to meet rising enterprise demand. Competition intensifies as vendors expand data center footprints, enhance interconnect speeds, and introduce flexible instance configurations. Strategic partnerships with AI software developers and enterprise clients help strengthen platform adoption. Continuous innovation in tensor processing architecture, optimized ML frameworks, and integrated development environments further shapes competition. Vendors also prioritize cost efficiency through usage-based pricing models and optimization tools, aiming to attract AI teams managing large and complex workloads. This combination of performance innovation, global expansion, and ecosystem integration defines the evolving market landscape.


Key Player Analysis

Recent Developments

- In 2025, Google Cloud Platform unveiled Ironwood, its seventh-generation Tensor Processing Unit (TPU), at the Google Cloud Next conference.

- In 2024, Amazon Web Services (AWS) announced details about the next-generation Trainium3 AI training chip, expected to be four times more performant than its predecessor.

- In 2023, Microsoft Azure launched new Azure Virtual Machines with NVIDIA H100 GPUs and introduced custom silicon, including the Azure Maia AI accelerator chip.

Report Coverage

The research report offers an in-depth analysis based on Type, Application and Geography. It details leading market players, providing an overview of their business, product offerings, investments, revenue streams, and key applications. Additionally, the report includes insights into the competitive environment, SWOT analysis, current market trends, as well as the primary drivers and constraints. Furthermore, it discusses various factors that have driven market expansion in recent years. The report also explores market dynamics, regulatory scenarios, and technological advancements that are shaping the industry. It assesses the impact of external factors and global economic changes on market growth. Lastly, it provides strategic recommendations for new entrants and established companies to navigate the complexities of the market.

Future Outlook

- The market will grow as enterprises scale generative AI and large-model training.

- Cloud providers will expand TPU clusters to support faster and more efficient workloads.

- Demand for energy-efficient AI compute will push adoption of next-generation TPU designs.

- Customizable TPU instances will attract mid-sized businesses seeking flexible performance.

- Integration of TPUs into multimodal AI systems will accelerate across industries.

- Wider use of AI in automation will increase real-time inference workloads on TPUs.

- Hybrid cloud strategies will strengthen TPU adoption in regulated sectors.

- Advancements in tensor core architecture will reduce training time for complex models.

- Global data center expansion will improve regional access to TPU compute.

- Partnerships between cloud vendors and AI developers will drive optimized TPU ecosystems.